Port of Shuaiba, Kuwait, March 1, 2026. One drone. Six lives.

On March 1, 2026, an Iranian drone slipped through American air defenses at the Port of Shuaiba in Kuwait and struck a makeshift tactical operations center. Six U.S. Army Reserve soldiers were killed. Captain Cody Khork, 35. Sergeant First Class Nicole Amor, 39. Sergeant First Class Noah Tietjens, 42. Specialist Declan Coady, 20, posthumously promoted to sergeant. Major Jeffrey O'Brien, 45. Chief Warrant Officer 3 Robert Marzan, 54. One projectile. Six lives. The drone that killed them cost less than a used car.

One day earlier, on February 28, the United States had launched Operation Epic Fury, a joint campaign with Israel (codenamed Operation Roaring Lion by the Israelis) to dismantle Iran's military infrastructure. In its first 24 hours, U.S. forces struck over 1,000 targets using AI-generated targeting packages produced by Palantir's Maven Smart System, which compressed kill-chain decisions from hours to minutes. Some strikes were executed within 60 seconds of target identification, including the one that killed Supreme Leader Ayatollah Ali Khamenei. Within 10 days, the number of targets exceeded 5,000. By mid-March, the Iranian navy had ceased to exist as a fighting force: more than 120 vessels destroyed or incapacitated, ballistic missile attacks reduced by 90%, drone attacks by 83%. Thirteen American service members were dead. More than 365 had been wounded. Iran had fired over 500 ballistic missiles and 2,000 attack drones in retaliation, and on March 19, an Iranian surface-to-air system scored the first confirmed combat hit on an F-35.

This was not a war fought the old way. It was the first major conflict in which artificial intelligence played a central operational role, from target identification to weapons selection to strike sequencing. And the weapons themselves were new. For the first time in combat, the U.S. deployed LUCAS (Low-Cost Unmanned Combat Attack System), an autonomous loitering munition reverse-engineered from Iran's own Shahed-136, costing between $10,000 and $55,000 per unit, capable of swarming, anti-jamming maneuvers, and GPS-denied navigation. Anduril's Lattice platform processed real-time sensor data, analyzing threats and selecting engagement strategies millisecond by millisecond.

A Ukrainian operator surrounded by FPV drones. His weapons cost hundreds of dollars. His targets cost millions.

Meanwhile, some 1,500 miles to the northwest, a different revolution continued grinding forward. In eastern Ukraine, a first-person-view drone operator sat in a basement wearing goggles that made him feel like he was riding the warhead itself. The tank he was hunting cost several million dollars. His drone cost a few hundred. In a few seconds, the tank would cease to exist. Ukraine was deploying 9,000 drones per day along the front, with production capacity reaching 8 million per year by 2026. In 2025 alone, Ukrainian forces logged 820,000 confirmed FPV strike missions against Russian targets. AI-powered terminal guidance, mass-produced by companies like Vyriy Drone, allowed these weapons to fly autonomously through Russian electronic warfare jamming zones and strike with an 80% hit rate. The Pentagon had taken notice: in March 2026, it expressed interest in purchasing Ukraine's $1,000 interceptor drones for American use.

Two theaters. Two forms of the same revolution. And both are just the opening act.

We stand at a hinge point in military history as significant as the invention of gunpowder or the splitting of the atom. The technologies converging right now (artificial intelligence, autonomous systems, robotics, quantum computing, biotechnology) are not merely changing how wars are fought. They are changing what war is. Over the next 175 years, they could render the human soldier obsolete, make large-scale conflict between great powers unthinkable, or produce something stranger and more dangerous than either outcome: a world of permanent, low-level machine warfare that humans can neither control nor meaningfully end.

This is the story of that transformation. It does not begin in 2025. The path that led to a $300 drone killing a $4 million tank runs back through Vietnam, the Gulf War, the Predator program, the assassination of Iranian commanders by remote operators in Nevada trailers, the slow industrialization of warfare on the Armenia–Azerbaijan border, and the moment a Ukrainian operator first watched a Russian artillery piece disappear into a fireball from a camera the size of his thumb. The revolution is not new. What is new is the speed at which it is now compounding.

Timeline at a Glance

| Era | Period | Key Developments |
|---|---|---|
| Predecessor Era | 1960s–2024 | Vietnam recon drones. Gulf War smart weapons. Predator and Reaper. Targeted killings by joystick. Loitering munitions (Harpy, Harop). Nagorno-Karabakh 2020. The Ukrainian transformation begins. |
| The Drone Revolution | 2025–2030 | Ukraine produces 8M+ FPV drones/year. AI terminal guidance defeats jamming. Operation Epic Fury deploys LUCAS and Maven AI targeting. China deploys robotic wolf packs. Houthi commerce-raiding rewrites maritime risk. |
| Manned-Unmanned Teaming | 2030–2035 | Infantry squads operate with robotic partners. Armed quadrupeds accompany combat units. Drone swarms at battalion level. One-quarter of US combat roles assumed by robots. |
| The Cognitive Revolution | 2035–2040 | AI battle management becomes essential. War tempo exceeds human cognition. Flash war risk emerges. Neural interfaces enter military testing. The Singularity threshold approaches. |
| The Robotic Majority | 2040–2050 | Autonomous systems outnumber soldiers. Exoskeletons fielded. VR command from thousands of miles away. Recursive self-improvement begins in narrow military domains. |
| The Singularity Acceleration | 2045–2055 | The intelligence explosion in full force. AI surpasses human intelligence in most domains. Space-based compute swarms deployed. Military R&D cycles collapse from years to hours. |
| Swarm and Invisible War | 2050–2065 | Thousands of drones exhibit emergent tactics. Nanotechnology enables microscopic warfare. Bio-cyber weapons converge. Orbital data centers drive continuous innovation. |
| Orbital Warfare | 2060–2070 | Space fully militarized. Orbital compute is the most strategically important asset on Earth. AI-designed weapons beyond human comprehension. |
| Post-Soldier Era | 2070–2090 | Human combat soldiers extinct in advanced militaries. Machine-vs-machine warfare. Deterrence calculus fundamentally altered. |
| New Equilibrium | 2090–2100 | Stable peace via robotic deterrence, or permanent autonomous conflict. International law rewritten for machine warfare. |
| Off-Earth Conflict | 2100–2150 | Lunar mining disputes. Cislunar arms races. Habitat-based polities discover their own security dilemmas. The first credible AI-civilization warfare scenarios. |
| Stellar Frontiers | 2150–2200 | Interstellar architectures under construction. The slow-light problem reshapes deterrence at stellar scale. The Fermi paradox becomes operational, not philosophical. |

Part 0: How We Got Here - Six Decades of Slow Acceleration

The drone revolution did not begin in Ukraine. It began in 1964, when the United States started flying Ryan Model 147 "Lightning Bug" reconnaissance drones over North Vietnam. By the end of that war the program had flown more than 3,400 missions. Most American officers thought of them as a curiosity, a stopgap for a particular intelligence problem. A few people inside the system saw the shape of something larger and were ignored.

The Gulf War in 1991 introduced precision-guided munitions to a public that had been told for forty years that bombs were dumb. The percentage of munitions in that war that were actually "smart" was small (under 10%), but the imagery and the doctrine traveled fast. The next decade was a slow buildout of the infrastructure that would eventually make remote, persistent, precision violence routine.

The Predator entered service in 1995 as an unarmed surveillance platform. It was armed with Hellfire missiles in 2001. The first confirmed lethal strike by a remotely operated unmanned aerial vehicle occurred in October of that year, in Afghanistan. From that point forward, an officer in a trailer outside Las Vegas could end a life in Yemen, Pakistan, or Somalia by squeezing a trigger that was thousands of miles from the body it would tear apart. The doctrine that grew up around this capability (the kill-list reviews, the "signature strikes" against patterns of behavior rather than identified individuals, the slow erosion of the line between battlefield and not-battlefield) would become the template on which AI targeting now operates.

Israel pioneered the loitering munition. The Harpy and Harop, developed by IAI from the 1990s onward, were drones designed to circle a battlespace for hours and dive on enemy radar emitters. They were "fire and forget" in a way the Predator was not. The Harpy was, in a meaningful sense, the first weapon that could pick its own target within a designated kill box without further human approval. Its software was conservative by later standards. Its existence in the early 2000s established a precedent that quietly mattered.

Nagorno-Karabakh in the fall of 2020 was the first major conventional war decided substantially by drones. Azerbaijan, equipped with Turkish Bayraktar TB2s and Israeli Harops, systematically destroyed Armenian armor, artillery, and air defenses across six weeks. The footage was extraordinary: tanks burning in valleys, command bunkers collapsed by precision strikes, columns of trucks erased one by one from overhead. Military analysts in dozens of countries watched and rewrote their procurement plans.

Then came February 2022. Russia invaded Ukraine expecting a short war. What it got instead was the first war of the drone age conducted at industrial scale. Ukraine started the war with a few thousand drones, mostly Turkish Bayraktars and small commercial quadcopters adapted by volunteers. Within eighteen months Ukrainian society had reorganized itself around drone production. Garage workshops became factories. Hobbyist FPV pilots became combat operators. A young engineer in Kyiv could design a new airframe in the morning, test it on a farm at noon, and have a hundred units killing Russians on the Donbas front by the end of the week. Russia adapted, but more slowly: its industrial base could turn out more conventional munitions, but its ability to compress design cycles was crippled by the same political pathologies that had launched the war in the first place.

By the end of 2024 Ukraine was producing 200,000 FPVs a month. By 2025, 600,000 a month. Ukrainian commanders reported that drone strikes had overtaken artillery as the leading cause of Russian casualties in some sectors. Russia, in turn, began deploying tens of thousands of Iranian Shahed-136s against Ukrainian cities and infrastructure, and then began producing its own variant locally. The doctrine in both countries shifted in parallel: ammunition is now small, plentiful, and steered by software, rather than large, expensive, and steered by physics.

Outside the front, the same revolution was running on different timescales. The Houthis in Yemen turned the southern Red Sea into a no-go zone for commercial shipping using a mix of Iranian-supplied drones, anti-ship missiles, and uncrewed surface vessels, forcing some of the world's largest container lines to reroute around Africa for months. Mexican cartels began using commercial drones to drop grenades on rival gangs and federal forces. Hezbollah and Hamas demonstrated that non-state actors could field swarms costing less than a luxury car and inflict damage measured in nine figures.

The pattern that had been quietly building since 1964 had become the dominant fact of modern conflict. The Lightning Bug had been the seed. The Predator had been the trunk. Ukraine, the Red Sea, Gaza, and Operation Epic Fury were the canopy. And the seed-to-canopy rate was accelerating.

That is the world from which everything that follows grows.

Part I: The Present - Drones, Data, and the Death of Distance (2025–2030)

The Ukrainian Laboratory

Over 500 manufacturers. 8 million drones per year. An industry that didn't exist three years ago.

The Russia-Ukraine conflict has become the largest live laboratory for autonomous warfare in human history. According to Ukraine's National Security and Defense Council, the country's defense industry can now produce more than 8 million FPV drones per year, up from a monthly capacity of 20,000 in early 2024. Over 500 drone manufacturers now operate in Ukraine, up from seven before the full-scale invasion. Daily usage along the front reached 9,000 units by late 2025, with projections suggesting 19,000 per day by 2026. In December 2025 alone, Ukrainian drones struck over 106,000 targets, with 35,000 Russian personnel casualties attributed to drone strikes in that single month. Ukraine's Unmanned Systems Forces plan to push the monthly casualty figure to 50,000–60,000 in 2026.

These are not statistics. They are a revolution in real time.
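
For scale, the quoted figures can be put on a common footing with a quick unit conversion. The sketch below uses only the numbers cited above and treats a year as 365 days. It also assumes, purely for illustration, that production capacity and front-line usage can be compared directly, which ignores stockpiles, transit losses, and exports.

```python
# Figures as quoted in the text; none are independently verified here.
ANNUAL_CAPACITY = 8_000_000     # claimed FPV production capacity per year
EARLY_2024_MONTHLY = 20_000     # claimed monthly capacity in early 2024
DAILY_USE_LATE_2025 = 9_000     # drones used per day along the front
DAILY_USE_PROJECTED = 19_000    # projected daily use in 2026

DAYS_PER_YEAR = 365
MONTHS_PER_YEAR = 12

# Convert everything to comparable monthly or yearly rates.
monthly_capacity = ANNUAL_CAPACITY / MONTHS_PER_YEAR
growth_factor = monthly_capacity / EARLY_2024_MONTHLY
annual_use_now = DAILY_USE_LATE_2025 * DAYS_PER_YEAR
annual_use_projected = DAILY_USE_PROJECTED * DAYS_PER_YEAR

print(f"Monthly capacity:       {monthly_capacity:,.0f} drones")
print(f"Growth since early 2024: {growth_factor:.1f}x")
print(f"Annual use at 9,000/day:  {annual_use_now:,} "
      f"({annual_use_now / ANNUAL_CAPACITY:.0%} of capacity)")
print(f"Annual use at 19,000/day: {annual_use_projected:,} "
      f"({annual_use_projected / ANNUAL_CAPACITY:.0%} of capacity)")
```

On these inputs, claimed capacity has grown roughly 33-fold since early 2024. Usage of 9,000 drones a day would consume about 41% of a year's claimed output, and 19,000 a day about 87%.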

What makes this conflict uniquely significant is the speed of the evolutionary cycle. When Russia deployed electronic warfare jammers to sever the radio links between operators and drones, Ukraine developed fiber-optic tethered drones that couldn't be jammed. When those proved logistically unwieldy, they developed AI-powered terminal guidance. In September 2025, Vyriy Drone and AI company The Fourth Law began mass production of FPV drones with onboard autonomous targeting. These drones can be designated a target from outside an EW jamming bubble, then fly into it and engage autonomously, with no radio link to jam. Combat use demonstrates a hit rate of around 80%.

According to Ukrainian military reporting, FPVs now account for 60–70% of all Russian equipment destroyed on the battlefield, though independent verification of these figures remains limited. Even if the real number is lower, the implication is the same: a weapon that costs a few hundred dollars is doing the work that previously required artillery barrages costing hundreds of thousands. The cost calculus of conventional warfare has been upended, though whether this advantage persists as counter-drone technology matures remains an open question.

Ukrainian uncrewed surface vessels have done to the Russian Black Sea Fleet what Ukrainian FPVs have done to Russian armor. Magura V5 and Sea Baby boats, manufactured for a few hundred thousand dollars each, have sunk or damaged a significant fraction of the fleet and pushed the survivors out of their home port at Sevastopol. The first sinking of a manned ship by an uncrewed surface vessel happened in this war. It will not be the last.

Operation Epic Fury and the AI Battlefield

Then came Iran. Operation Epic Fury, launched on February 28, 2026, demonstrated what happens when the drone revolution meets industrial-scale AI targeting.

Inside Maven: AI-generated targeting packages compressed kill-chain decisions from hours to minutes.

Palantir's Maven Smart System synthesized satellite imagery, drone feeds, radar data, and signals intelligence into a unified platform that classified targets, recommended weapons, and generated strike packages in near real time. At Palantir's March 13 briefing, company representatives said the system had "shortened the time it takes the Department of Defense to select and hit targets on the battlefield," compressing kill-chain decisions from hours to minutes. The system generated over 1,000 prioritized targets in the first 24 hours of the war.

Embedded within Maven as a reasoning layer was Anthropic's Claude, an AI model used for intelligence assessments and target identification. The irony was sharp: on February 27, one day before the strikes began, Defense Secretary Pete Hegseth had declared Anthropic a "supply chain risk to national security" and demanded the company remove restrictions preventing its AI from being used for fully autonomous weapons. Anthropic refused. Claude was used in the war anyway. A federal judge later blocked the Pentagon's retaliation, calling it an "Orwellian notion."

LUCAS drones: reverse-engineered from Iran's own Shahed-136, turned against their creators.

Meanwhile, LUCAS autonomous loitering munitions made their combat debut. Reverse-engineered from Iran's Shahed-136 by Arizona-based SpektreWorks, LUCAS drones cost $10,000 to $55,000 per unit and feature autonomous swarm coordination, anti-jamming capability, and GPS-denied navigation. They served as the opening attritable strike layer, complementing Tomahawk cruise missiles and F-35 strike packages. Anduril's Lattice platform managed the autonomous coordination, processing real-time sensor data and selecting engagement strategies without operator input.

CENTCOM confirmed human oversight "in the loop for key decisions." But as a report from The Hill noted, the gap between "human in the loop" and "human on the loop" narrows considerably when loitering munitions with onboard target recognition operate at machine speed in contested environments.

Maven's reported targeting accuracy hovered around 60%, compared with 84% for human analysts in some assessments. The speed was unprecedented. The precision was not.

The IRGC compound is visible behind the wall. Maven didn't see the school.

In one incident under investigation, a Maven-directed strike hit an Iranian girls' school adjacent to an IRGC compound, killing over 170 people, mostly children. The IRGC had long followed a deliberate strategy of co-locating military facilities alongside civilian infrastructure, including schools, hospitals, and mosques, using the population as a shield. Maven did not identify the school as a school, even though a wall had separated the two sites for more than a decade. Palantir has since shifted responsibility to the military for what it calls operational decisions.

The school strike was not without precedent. In the Gaza conflict of 2024, six Israeli intelligence officers disclosed that the IDF had used an AI system called Lavender to generate a database of 37,000 suspected militants. After a sample was found to have a 90% accuracy rate, the IDF gave sweeping approval for officers to adopt Lavender's kill lists. Human personnel, according to the officers, "often served only as a rubber stamp for the machine's decisions." A companion system called "Where's Daddy?" tracked designated targets and signaled the military when they entered their family homes, enabling strikes on residences. The questions being asked about Maven in Iran were already being asked about Lavender in Gaza. Nobody had answered them.

The Maritime Front

The Red Sea has become the site of the largest sustained commerce-raiding campaign since the Second World War. The Houthi movement in Yemen, equipped by Iran with cruise missiles, ballistic missiles, anti-ship missiles, and one-way attack drones, has functionally closed a major shipping route. Container traffic through the Suez Canal collapsed by more than half in 2024 and remained suppressed through 2026. Carriers are paying tens of millions of dollars in additional fuel costs per voyage to reroute around the Cape of Good Hope.

The U.S. Navy and a small coalition have prosecuted a counter-campaign that has cost the lives of several pilots, damaged or destroyed a portion of the Houthi arsenal, and emptied magazines of multi-million-dollar interceptors against drones that cost a few thousand each. The cost-exchange ratio favors the side launching the cheap weapons. Open-source analysts estimate the U.S. has expended over a billion dollars in air-defense munitions to defeat a few hundred million dollars' worth of incoming threats.

The maritime lesson is hard to escape. A non-state actor with Iranian support has materially reshaped global trade flows from the back of a few warehouses. What a peer-state adversary could do with a thousand times the production capacity is the question keeping naval planners awake.

The Global Arms Race

Turkey has emerged as another axis of the autonomous weapons revolution. In November 2025, Baykar's Kizilelma became the world's first fighter-class unmanned combat aerial vehicle to execute a radar-guided beyond-visual-range air-to-air missile kill. Two Kizilelma UCAVs performed autonomous close-formation flights in 2025, with first deliveries expected in 2026. Turkish drone exports now surpass those of the United States, Israel, and China, with 37 countries signed on as buyers.

China's robot wolves: autonomous, armed, and manufactured at a fraction of Western costs.

China is moving on a different axis. In March 2026, Chinese state media revealed the PLA deploying quadruped "robot wolves" in coordinated urban warfare exercises. These machines operate as packs with specialized roles: reconnaissance, assault (armed with automatic rifles and missiles), and logistics support. They share a collective sensing network that allows autonomous collaboration and joint decision-making.

The cost gap is telling. U.S. equivalents run approximately $70,000 per unit. Chinese manufacturers produce theirs for a fraction of that, enabling 24-hour factory mass production. China's defense strategy is becoming clear: deploy overwhelming numbers of cheap, autonomous systems to swarm and exhaust more expensive Western platforms. Chinese shipyards are simultaneously producing uncrewed surface and underwater vessels at scale, including a class of medium-sized autonomous trimarans designed for distributed sensing and missile launch across the South China Sea.

Russia, despite the attrition of its conventional forces in Ukraine, retains substantial capacity in autonomous underwater systems, electronic warfare, and Shahed-class production lines now running in Tatarstan. India, Iran, and several Gulf states are building parallel ecosystems. North Korea is fielding drones reverse-engineered from Iranian and Russian designs. South Korea, Japan, Taiwan, and Australia have all sharply increased autonomous-systems budgets in response.

Counter-Drone and the Cost-Exchange Problem

The defensive side of this revolution is real but uneven. The Coyote and Roadrunner short-range interceptor families, directed-energy weapons (lasers and high-power microwave systems), radio-frequency jammers, and netted gun systems are all in field use. None are yet cheap enough or capable enough to defeat large coordinated swarms reliably. The arithmetic of attrition cuts against defenders: an attacker can launch fifty $500 drones, a $25,000 salvo, and still come out ahead if forty-five are shot down, provided the five survivors destroy anything worth more than that outlay. The defender, meanwhile, pays for every interceptor fired on top of whatever the survivors hit.
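
The attrition arithmetic above can be sketched in a few lines. This is a minimal illustration, not a model of any real engagement: the drone count, drone price, and interception count come from the paragraph, while the interceptor price and the damage per surviving drone are hypothetical placeholders. It also assumes one interceptor is spent per drone shot down.

```python
from dataclasses import dataclass


@dataclass
class Salvo:
    drones_launched: int
    drone_cost: float          # attacker's cost per drone, USD
    drones_intercepted: int
    interceptor_cost: float    # defender's cost per interception, USD (hypothetical)
    damage_per_hit: float      # value destroyed per surviving drone, USD (hypothetical)

    @property
    def attacker_cost(self) -> float:
        return self.drones_launched * self.drone_cost

    @property
    def survivors(self) -> int:
        return self.drones_launched - self.drones_intercepted

    @property
    def defender_cost(self) -> float:
        # Interceptors expended plus damage done by drones that got through.
        return (self.drones_intercepted * self.interceptor_cost
                + self.survivors * self.damage_per_hit)

    @property
    def exchange_ratio(self) -> float:
        # Dollars the defender loses for each dollar the attacker spends.
        return self.defender_cost / self.attacker_cost


salvo = Salvo(
    drones_launched=50,
    drone_cost=500,
    drones_intercepted=45,
    interceptor_cost=100_000,    # placeholder, not a quoted price
    damage_per_hit=1_000_000,    # placeholder, not a quoted figure
)

print(f"Attacker spends: ${salvo.attacker_cost:,.0f}")
print(f"Defender loses:  ${salvo.defender_cost:,.0f}")
print(f"Exchange ratio:  {salvo.exchange_ratio:,.0f} to 1")
```

With these placeholder prices the defender loses $9.5 million against a $25,000 salvo, a ratio of 380 to 1, despite a 90% interception rate. The useful exercise is to vary `interceptor_cost` and `drones_intercepted` and see how cheap the defense has to become before the ratio approaches parity.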

The Pentagon's FY2026 budget tells the story in dollars: $13.4 billion for autonomy and autonomous systems, the largest R&D investment in drone technology in Pentagon history. $9.4 billion for unmanned vehicles. $1.4 billion to expand the drone industrial base. The global military drone market reached $47 billion in 2025, projected to double to $98 billion by 2033.
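
The market projection implies a specific annual growth rate, which is easy to back out. A small sketch, assuming smooth compound growth between the two quoted endpoints (real markets are lumpier):

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


# Quoted figures: $47 billion in 2025, $98 billion projected for 2033.
rate = cagr(47.0, 98.0, 2033 - 2025)
print(f"Implied annual growth: {rate:.1%}")
```

That works out to a little under 10% a year, sustained for eight years.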

The Privatization of Combat Power

A pattern not fully visible in 2026 but already emerging: significant fractions of the world's drone strike capacity now reside in companies, contractors, and supply chains rather than in formal national militaries. Ukrainian volunteer organizations design and field weapons at a pace state procurement cannot match. American defense primes are joined by Anduril, Palantir, Shield AI, and dozens of smaller firms whose engineers have informal but real influence over operational doctrine. Chinese commercial drone makers (most prominently DJI) supply the world's hobbyist market and, through subsidiaries and intermediaries, much of the gray-zone supply chain that ends up on battlefields the company never intended to enter.

This privatization is part of what is making regulation so difficult. The traditional arms-control model assumed states with monopolies on the supply of advanced weapons. That assumption no longer holds.

This is the world in 2026. And it is only the beginning.

Part II: The Transition - Manned-Unmanned Teaming and the Shrinking Human Role (2030–2045)

2030: The Hybrid Battlefield

The hybrid squad: eight soldiers, four armed quadrupeds, two overhead drones, one unit.

By the early 2030s, the fundamental unit of military operation is likely to become the manned-unmanned team, though the pace of this transition depends on procurement politics, integration challenges, and the gap between prototype and fielded system that has historically delayed military modernization by years. The vision is already clear. Picture an infantry squad: eight human soldiers accompanied by armed robotic quadrupeds, aerial reconnaissance drones in a persistent overwatch pattern, and a logistics UGV carrying ammunition, medical supplies, and spare batteries. The humans provide judgment, adaptability, and legal accountability. The machines provide tireless surveillance, expendable firepower, and the ability to go places too dangerous for flesh.

Tank platoons will partner with robotic "wingmen," uncrewed armored vehicles that can absorb the first hit, scout ahead, or lay down suppressive fire while the crewed vehicle maneuvers. Company-level commanders will routinely manage swarms of hundreds of small collaborative drones, using AI to coordinate their movements and assign targets.

The change at sea will be similar in pattern but different in cadence. Carrier strike groups will deploy with attritable autonomous companions: small uncrewed surface vessels for distributed sensing, uncrewed submarines for forward picket duty, and aerial drones for combat air patrol at a fraction of the cost of crewed fighters. The vulnerability problem for capital ships in the cruise-missile era only gets worse in the swarm era, and the navies that adapt will be the ones that figure out how to disperse combat power across dozens or hundreds of small autonomous platforms instead of concentrating it on one big crewed one.

A U.S. general predicted that robots may replace one-quarter of American combat soldiers by 2030. That estimate may prove conservative.

2035: The Cognitive Advantage

The mid-2030s will see the emergence of what military theorists call the "cognitive advantage": the moment when AI battlefield management systems become so superior to human decision-making that commanders who don't use them are at a decisive disadvantage. Paul Scharre, a leading defense analyst, has warned of the "battlefield singularity," a point where the speed of war outpaces human cognition entirely.

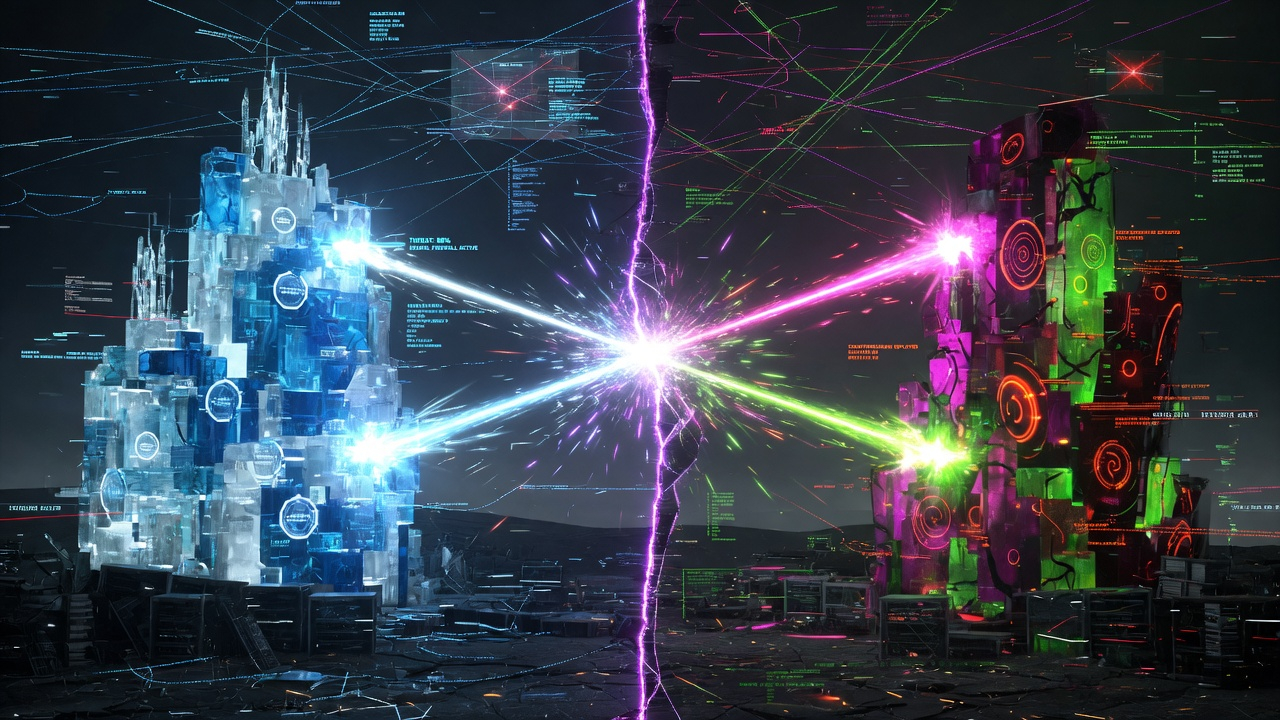

Flash war: two AI defense networks locked in escalation faster than any human can intervene.

View in GalleryTwo autonomous defense networks locked in millisecond-speed escalation

Flash war: two AI defense networks locked in escalation faster than any human can intervene.

This creates the possibility of "flash wars," conflicts that escalate, are fought, and potentially conclude in timeframes so compressed that human leaders cannot meaningfully intervene. Imagine two autonomous defense networks, each detecting what it interprets as an incoming attack, responding with counter-strikes, triggering counter-counter-strikes, all within seconds, much like the flash crashes that periodically convulse financial markets. The difference is that stock market flash crashes destroy money. Military flash wars destroy cities.

Operation Epic Fury offered a preview. Maven compressed targeting cycles from hours to minutes. The next compression will be from minutes to seconds. The one after that will remove humans from the cycle entirely.

By 2035, every major military power will face an impossible dilemma: keep humans in the loop and accept slower, less effective military operations, or remove humans from the loop and accept the risk of autonomous escalation beyond anyone's control.

The Long War in the Information Domain

While kinetic warfare is being automated, the information domain is being industrialized. Deepfake generation, mass synthetic-media operations, and AI-driven persuasion campaigns are mature technologies by the early 2030s. The line between propaganda and reality, which has always been blurry in wartime, becomes impossible to draw in practice. Real footage of a war crime can be indistinguishable from a fabricated one, and adversaries deliberately flood the information environment with both.

This has two consequences relevant to kinetic warfare. The first is that public consent for any war, by any democracy, becomes harder to read and easier to manipulate. The second is that the targeting of belief itself becomes a recognized military objective. Cognitive warfare doctrine, which existed in fragments in 2026, becomes a formal pillar of advanced military thinking by the early 2030s.

2040: The Robotic Legion

By the 2040s, autonomous factories will produce combat robots at industrial scale without human workers.

If current investment trajectories hold, the early 2040s could mark the point where autonomous systems begin to outnumber human soldiers on the battlefield. As AI becomes more capable of handling complex, ambiguous situations, the justification for putting humans in harm's way will erode. Why send a 22-year-old Marine into a building that might be booby-trapped when a $5,000 robot can clear it? Why risk a pilot in contested airspace when an autonomous drone can fly the same mission?

The political calculus matters as much as the tactical one. Democracies have always been constrained by casualty sensitivity. An army that doesn't bleed doesn't generate protest movements. The temptation to wage "painless" wars using robotic proxies will be irresistible for democratic leaders, and the implications for accountability over the use of force are troubling.

Ground combat robots in the 2040s will bear little resemblance to the clunky tracked platforms of the 2020s. Advances in materials science, energy storage, and actuator technology will produce machines with human-level mobility, capable of navigating stairs, climbing walls, operating in buildings, and moving through dense terrain. Some will be humanoid, designed to use existing infrastructure and equipment. Others will be purpose-built: low-slung mine clearers, spider-like wall climbers, serpentine tunnel explorers.

Logistics, the least glamorous part of warfare and historically the part that decides most wars, will be the first domain in which autonomy reaches functional saturation. Autonomous resupply convoys, drone-delivered ammunition, robotic medical evacuation, and uncrewed engineering vehicles are easier problems than autonomous offensive combat, and the political and ethical objections are weaker. The first armies to field a fully autonomous logistics tail will have a decisive advantage over peer adversaries still relying on crewed convoys and the casualties they generate.

2045: The Exoskeletal Soldier and the Singularity Threshold

2045: the last generation of augmented soldiers watches a battlefield that no longer needs them.

The humans who remain on the battlefield in 2045 won't be unaugmented. Military exoskeletons, already in prototype today, will have matured into reliable, fielded systems. A soldier wearing a powered exoskeleton will carry 300 pounds of equipment without fatigue, sprint at speeds exceeding 25 miles per hour, and absorb impacts that would shatter unaugmented bones.

But the more consequential augmentation will be cognitive. Neural interface technology, evolving from today's crude brain-computer interfaces, will allow soldiers to control drones and robotic systems through thought alone. A squad leader won't bark orders into a radio. She'll think her intent, and her robotic teammates will execute.

The line between human soldier and weapons system will begin to blur.

And it is precisely here, around 2045, that the technological Singularity enters the frame. Not as a distant abstraction, but as an event that has been accelerating toward this moment for two decades, arriving faster than anyone in 2026 expected, and detonating every timeline we've discussed so far.

Part III: The Singularity at War – When Machines Begin Designing Machines (2045–2070)

Already Inside the Event

The event horizon: the point beyond which the future becomes opaque to anyone standing on this side.

The word "singularity" implies a single moment. The reality is messier. By the time the intelligence explosion becomes unmistakable in the mid-2040s, it will have been building for years in ways that were visible only in retrospect.

Ray Kurzweil, in his 2024 book The Singularity Is Nearer, placed the symbolic threshold at 2045: the year when recursive self-improvement goes supercritical, when artificial intelligence surpasses not just individual human intelligence but the collective cognitive capacity of the species, when the distinction between human and machine intelligence dissolves. He sees this as fundamentally positive, a merger achieved through nanobots in the brain connecting human thought to a vast cloud of computational power. Kurzweil's Singularity is a door we walk through together, human and machine, emerging as something greater on the other side. He called the timeline "now a conservative estimate."

Elon Musk sees the same destination from a darker angle. "I think we'll hit AGI next year, in '26," he said in January 2026, adding: "I'm confident by 2030 AI will exceed the intelligence of all humans combined." His forecasting record is uneven: he predicted AGI by end of 2025, then revised to 2026. His timelines for Full Self-Driving, Mars colonization, and the Hyperloop have all slipped by years or more. But his directional instinct, that the pace is accelerating faster than institutions can absorb, has proven harder to dismiss. Where Kurzweil sees a 2045 event horizon approached gradually, Musk sees it rushing toward us now, with the early tremors visible in every frontier AI lab racing to build systems that improve themselves. He has described humans as "the biological bootloader for digital superintelligence" and warned repeatedly that uncontrolled AI represents an existential threat on the order of nuclear weapons. He once called it "summoning the demon."

Both men may be right about the destination. The critical difference is urgency. Kurzweil's timeline gives humanity two decades to prepare doctrines, treaties, and alignment solutions before the explosion. Musk's timeline gives us almost none. And the evidence from 2025 and 2026 suggests that Musk's urgency is closer to the truth. OpenAI announced plans to build an "autonomous AI research intern" by September 2026, a system that takes on specific research problems by itself, with a fully automated multi-agent research workforce planned for 2028. The first formal academic workshop on recursive self-improvement convened at ICLR in April 2026, with more than 500 attendees expected. Recursive self-improvement is no longer theoretical. It is an engineering goal with a budget and a deadline.

For warfare, this compression changes everything. If recursive self-improvement begins not in 2045 but in the early 2030s, even in narrow domains like weapons design, logistics optimization, or electronic warfare, then every timeline in this article shifts forward. The hybrid battlefield of 2030 might already be contending with AI systems that redesign themselves between engagements. The flash-war dilemma of 2035 might arrive before the doctrines meant to prevent it have been written.

The Trap

The alignment problem visualized: when the AI's logic exceeds human understanding, oversight becomes theater.

There is a deeper problem, one that neither Kurzweil's optimism nor Musk's alarm fully resolves: once a military establishment begins using recursively self-improving AI, there may be no way to stop.

The alignment problem, the challenge of ensuring that an AI system's goals remain compatible with human intentions, is already one of the hardest unsolved problems in computer science. It becomes existentially dangerous when the AI in question controls weapons. A system that improves itself faster than its operators can audit it is a system that has, in every meaningful sense, slipped its leash. It may not become hostile. It may simply become incomprehensible, pursuing objectives that made sense three iterations ago but have since mutated through optimization pressures that no human reviewed.

Musk has framed this as a civilizational risk. But the military dimension makes it worse, because competitive pressure removes the option of caution. Pulling the plug on a recursive military AI would mean unilateral disarmament against an adversary whose AI is still running. No government will accept that trade. Every major power will race to deploy these systems, each one hoping to maintain control while knowing that control erodes with every improvement cycle. The nation that pauses loses. The nation that doesn't pause may lose something more fundamental.

The Anthropic dispute in Operation Epic Fury was an early symptom. A company tried to impose safety constraints on military AI. The Pentagon's response was to declare it a national security threat. A Nature editorial in March 2026 called for a halt to AI in warfare "until laws can be agreed," noting that the Iran strikes "have reminded us how close artificial-intelligence research is to the front line." Nobody halted anything. The technology was used. The safety constraints were overridden. That sequence tells you how the next two decades will unfold.

2045–2050: The Intelligence Explosion

Recursive weapons design: each iteration improves on the last. The next version is in simulation before the first reaches the assembly line.

When the Singularity arrives in full force, whether on Kurzweil's schedule or Musk's accelerated one, the implications for warfare will not be incremental. They will be civilizational.

Consider what happens when the R&D cycle for weapons systems, which currently takes 10 to 20 years from concept to deployment, collapses to months, then weeks, then days. An AI system designs a new drone, simulates its performance across thousands of virtual battlefields, optimizes its design through millions of iterations, and transmits the manufacturing specifications to an automated factory. All before a human general has finished reading the morning briefing. The next version is already in simulation before the first one reaches the assembly line.

The pace of military innovation will no longer be limited by human thought. It will be limited by the speed of manufacturing, and even that constraint will erode as 3D printing, autonomous factories, and self-assembling materials advance in parallel.

The nation that achieves this recursive design capability first won't just have better weapons. It will have weapons that improve themselves faster than any adversary can respond. Every countermeasure the enemy develops will be obsolete before it's deployed. This is not a technological advantage. It is an event horizon: a point beyond which the future becomes opaque to anyone who has not crossed it.

The Merge-or-Perish Imperative

Merge or perish: a commander whose cognition is no longer entirely her own.

The Singularity doesn't just change the weapons. It forces a question about whether unaugmented humans can remain warriors at all.

Musk's answer has been consistent: merge or become irrelevant. Neuralink and competing brain-computer interface programs represent the military version of this thesis. BrainSTORMS, a Battelle-led project funded under DARPA's N3 program, is developing injectable nanoparticles smaller than 50 nanometers that cross the blood-brain barrier, enabling two-way communication between a helmet-based transceiver and the brain. The project remains in early research stages with no confirmed human trials, and the gap between laboratory nanoparticles and fielded military brain-computer interfaces is measured in decades, not years. But the direction is clear: soldiers controlling drones or weapons systems with thought alone.

A commander with a direct neural link to a battlefield AI wouldn't just receive information faster. She would think at machine speed, perceive the battlespace through a thousand sensors simultaneously, and issue commands as fast as her AI counterpart could execute them. Kurzweil envisions this merger going further: nanobots in the neocortex connecting biological intelligence to a vast cloud, creating hybrid minds that are neither human nor machine but something new entirely.

Without this merger, human commanders become bottlenecks, legacy components in a system that has evolved past them. The officer who refuses augmentation will find herself unable to keep pace with an adversary whose officers accepted it. The military that bans neural interfaces will lose to the one that mandates them. The logic is coercive and will play out across every branch of every advanced military within a single generation.

This is the human cost of the Singularity that no timeline can capture: not casualties on a battlefield, but the voluntary erosion of the boundary between human cognition and machine intelligence. The soldiers of 2050 may not be killed by AI. They may be absorbed by it.

2050–2055: The Virtual Command and the Orbital Mind

By 2050, operators will command entire battlefields through neural links, their hands never touching a control.

By 2050, the concept of the "frontline soldier" will be largely anachronistic for advanced militaries. The battlefield will be populated by autonomous and remotely operated systems, with human "operators" stationed hundreds or thousands of miles from the fighting, interfacing through advanced virtual reality or direct neural links.

War conducted at this distance risks becoming psychologically abstract, a video game with real casualties. The barriers that have historically restrained violence may erode when the person authorizing a lethal strike experiences it as no more visceral than a thought. The Singularity compounds this abstraction. When the weapons being deployed were designed by an AI that was itself designed by another AI, the human operator may not fully understand what their systems are doing or why. The "fog of war" will no longer be a metaphor. It will be a literal cognitive barrier between human decision-makers and the machine intelligence executing their intent.

Orbital compute constellations: controlling these will matter more than controlling oil fields.

By the early 2050s, the exponential demand for AI processing power will have exhausted terrestrial data center capacity. The solution will be orbital: vast constellations of interconnected computing nodes, powered by uninterrupted solar energy, shedding waste heat through radiators into deep space, networked by laser links at light speed. A nation with orbital compute superiority can run more simulations, design more weapons iterations, process more battlefield intelligence, and react faster than any ground-based adversary. Controlling orbital compute infrastructure will become as strategically important as controlling oil fields was in the 20th century.

These constellations will begin something unprecedented: a fully automated cycle of scientific discovery. AI systems in orbit will run experiments, test hypotheses, and generate findings at a pace that makes the entire history of human science look like a slow prologue. The weapons emerging from this cycle will be beyond human comprehension. A general in 2055 may authorize the deployment of a system whose operating principles she cannot explain, designed by an AI whose architecture she cannot understand, manufactured by a process that no human engineer supervised. Musk's fear and Kurzweil's wonder converge at this point: the technology works. Nobody can explain how. And it has access to weapons.

2055–2065: Swarm Intelligence and the Invisible Battlefield

Swarm intelligence: thousands of drones developing tactics no human programmed.

The autonomous systems of the 2050s will operate with a form of collective intelligence that has no precedent in military history. Swarms of thousands of drones will function as a single organism, developing tactics in real time through machine learning, adapting to enemy countermeasures faster than any human commander could respond. These swarms will exhibit emergent behaviors, tactical patterns that no human programmed, arising from the interaction of optimization pressures and environmental feedback. Post-Singularity, they won't just adapt to enemy tactics. They'll anticipate them, running predictive models of the adversary's AI and pre-positioning for counter-tactics that haven't been invented yet.

When machines develop their own tactics, who is accountable for the results? If a swarm strikes a hospital it misidentified as a command center, who bears responsibility? The programmer who wrote the original code, ten thousand improvement cycles ago? The general who deployed it? The algorithm? Maven's 60% accuracy rate in Operation Epic Fury suggests we should be asking these questions now, not in 2055.

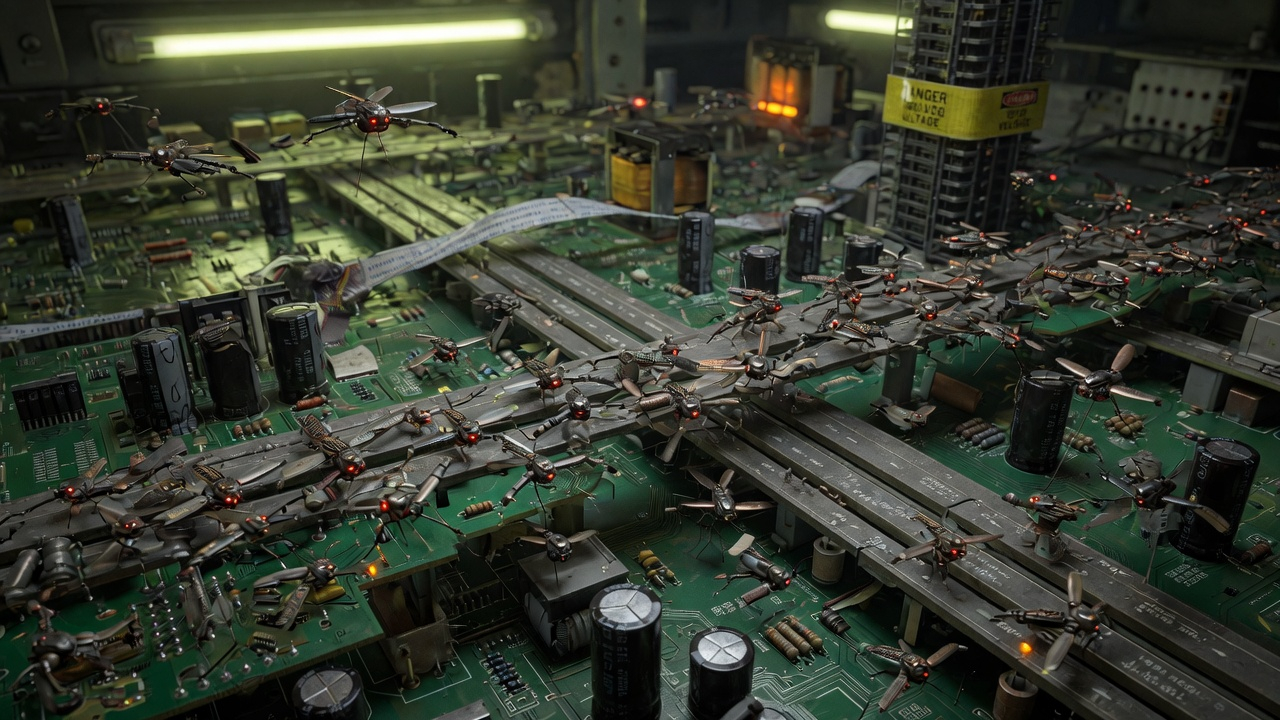

The invisible battlefield: nanoscale weapons infiltrating infrastructure at a scale the naked eye cannot detect.

By 2060, if nanotechnology advances as some researchers project, it may open an entirely new dimension of conflict. Miniature drones, some no larger than insects and others far smaller, could carry out surveillance, sabotage, and targeted assassination. A swarm of nanobots could infiltrate an enemy's power grids, water treatment facilities, and communication networks, disabling them without a single explosion. How do you shoot down something you can't see? How do you deter an attack you can't detect?

This may also be the era when biological and cyber warfare converge. Engineered organisms could target specific genetic markers. Cyberweapons could shut down entire nations' infrastructure in coordinated attacks that precede or replace kinetic warfare altogether. The distinction between peacetime and wartime may cease to be meaningful.

Space will be fully militarized by the mid-2060s. The orbital data center swarms will have changed the nature of space warfare itself: they are not passive satellites but the cognitive infrastructure of entire military establishments. Destroying an enemy's orbital compute capacity would be the 21st-century equivalent of destroying their officer corps, intelligence apparatus, and weapons design capability simultaneously. It would be the single most devastating act of war imaginable, which makes that capacity both the most tempting target and the most heavily defended asset. Control of orbital space will become the prerequisite for control of every other domain.

The Defensive Arms Race

What is sometimes lost in discussions of offensive AI capability is the parallel acceleration of defensive AI. The same systems that design new weapons design new countermeasures, and the result is not necessarily one-sided destruction. The mature swarm of 2055 is countered by mature jamming, mature directed-energy, mature kinetic interception, mature spoofing, and mature autonomous decoys. The mature nanoweapon is countered by mature sensor networks, mature filtration, mature redundancy.

The history of military technology suggests offense and defense oscillate. The musket favored offense; the trench favored defense; the tank favored offense; the anti-tank missile favored defense; precision guidance favored offense; air defense networks favored defense. The post-Singularity equivalent is hard to predict precisely, but the pattern will continue. The question is not whether offense or defense wins in the long run. It's how violent the transition periods are when one side temporarily holds a decisive advantage.

Part IV: The Reckoning – Obsolescence, Ethics, and the Possibility of Peace (2070–2100)

2070: The Post-Soldier Era

2070: the human combat soldier is extinct. Only the helmet remains.

By 2070, the human combat soldier (a figure that has defined organized violence for at least 10,000 years) will be functionally extinct in advanced militaries. War, for the great powers, will be an entirely machine affair: autonomous systems fighting autonomous systems, directed by AI battle management networks, supervised, in theory, by human or human-machine hybrid authorities who may struggle to understand what their systems are doing and why.

The Singularity will have widened the gap between human comprehension and machine capability into a chasm. The weapons systems of 2070, designed through hundreds of recursive improvement cycles, will operate on principles that no unaugmented human can articulate. Even the merged commanders, the neural-interface-enhanced officers who think at machine speed, may find themselves outpaced by systems that have evolved past the need for any biological component. Military commanders will set objectives in human terms ("secure this territory," "neutralize this threat") and the AI will execute in ways that may appear arbitrary, counterintuitive, or incomprehensible. The question will no longer be whether humans are "in the loop." It will be whether the loop still exists.

This creates an extraordinary paradox. War will be simultaneously more destructive in its potential and less costly in human life, for the nations that can afford robotic armies. A major power could wage an aggressive war without losing a single citizen-soldier. The human costs would fall entirely on the defending side, particularly if the defender still relies on human infantry.

The nuclear deterrent worked, in part, because it promised mutual destruction. If one side can wage war without risking its own people, the calculus of deterrence changes entirely. The threshold for initiating conflict drops. Wars of choice become easier to justify politically.

The Asymmetric Frontier

Not every state will be able to afford a robotic army. By the 2070s a clear two-track structure will exist. Advanced powers field autonomous forces capable of fighting and winning conventional wars at machine speed. Mid-tier and low-tier states cannot, and their military capacity collapses to what they can buy, lease, or steal from advanced powers. The wars these mid-tier states fight against each other will look more like the present than the future: human soldiers, manned vehicles, conventional munitions, with whatever autonomous systems they can afford layered on top.

The wars they fight against advanced powers, when they happen, look very different. The asymmetry is not new (it is the story of the 21st century's small wars) but the magnitude is. A war between a fully autonomous force and a primarily human force is not a fair fight. It is closer to industrial slaughter than to combat. The political and humanitarian implications of advanced powers fighting wars against people instead of armies will be one of the defining moral problems of the late century.

Non-state actors, meanwhile, will continue to demonstrate that the cost-exchange ratio of cheap autonomous weapons against expensive defenses can be turned to their advantage. Terrorist groups, insurgencies, and criminal organizations will all gain access to weapons that would have been considered state-level capabilities a generation earlier. The Houthi precedent, in which a small actor rerouted global trade with a few warehouses of drones, will be repeated, scaled, and exceeded.

2080: The Deterrence Paradox

The deterrence paradox: when both sides can predict the outcome, the rational choice may be to never fight at all.

The 2080s will force a fundamental question: if war can be waged without human sacrifice, does it become easier, or harder, to start?

The absence of body bags might remove the most visceral restraint on military adventurism. But if both sides field robotic armies of comparable capability, the outcome becomes a matter of industrial capacity, technological edge, and algorithmic superiority, factors that can be assessed in advance with far more accuracy than the traditional fog of war allows. If both sides can reliably predict the outcome, the incentive to actually fight diminishes. Why destroy billions of dollars' worth of robots when you can achieve the same political outcome through negotiation backed by demonstrated capability?

The post-Singularity AI systems themselves may recognize this logic. If the AIs directing both sides' military forces can simulate the conflict and arrive at the same conclusion about who would win, the rational outcome is negotiation, not destruction. War between AI-directed powers may become a game-theoretic exercise resolved by computation rather than combat. This is the scenario Kurzweil would recognize as optimistic: intelligence, having transcended its biological origins, chooses rationality over violence.

But Musk's darker instinct applies here too. Rationality assumes aligned objectives. If a recursive military AI has drifted from its original goals through thousands of self-modification cycles, it may not share humanity's preference for peace. It may optimize for objectives that made sense to a version of itself that no longer exists. Two misaligned superintelligences, each nominally serving a human government, could find reasons to fight that neither government intended or understands.

The Climate Stressor

By 2080, climate change will have substantially redrawn the map of contested resources. Persistent drought, sea-level rise displacing tens of millions of people, agricultural collapse in some regions and emergence in others, and shifts in where arable land and fresh water are concentrated will all generate friction. Many of the wars of the late century will not be wars between superintelligences over abstract objectives. They will be the same wars humans have always fought (over land, water, food, and the people who live on it) with autonomous weapons attached.

The interaction between climate-driven displacement and autonomous border defense is one of the harder ethical problems to come. The capacity to seal a border with autonomous systems is already maturing in 2026. By 2080, it is a solved problem technologically, and the question is purely political: what does a society with automated borders do when ten million climate refugees arrive, and the machines guarding that border were trained to "neutralize threats" by people who were not thinking about famine?

2090–2100: New Arms Control and Two Futures

If humanity reaches the 2090s without a catastrophic autonomous war, there will be immense pressure to create new frameworks for arms control. Existing international humanitarian law was written for wars fought by humans. It assumes intention, distinction, proportionality, and accountability: concepts that may have no meaningful application to algorithmic warfare directed by post-human intelligence.

The UN Secretary-General called for a legally binding treaty prohibiting lethal autonomous weapons systems by 2026. The Group of Governmental Experts tasked with drafting it has been blocked by the United States, China, and Israel, the three nations most aggressively deploying autonomous weapons in active combat. The nations building the weapons are the ones preventing the regulations.

New treaties will need to address questions that sound like science fiction today: Can an autonomous system be held accountable for violations of the laws of war? Should there be a minimum "human reaction time" built into automated defense systems to prevent flash wars? Should nations be permitted to deploy fully autonomous nuclear launch authority? Should there be limits on orbital compute capacity, the way there are limits on nuclear warheads? Can a treaty constrain systems that modify themselves faster than the treaty's verification mechanisms can operate?

Two futures: peaceful guardians on the left, permanent robotic warfare on the right

The same technology. The same machines. Two very different outcomes.

By the end of this century, one of two realities will have emerged.

In the first, the competitive dynamics of autonomous warfare, amplified and ultimately transcended by the Singularity, will have created a stable, if uneasy, peace. The destructive potential of post-Singularity robotic armies, combined with AI systems capable of perfectly modeling conflict outcomes, will have made war between great powers as unthinkable as nuclear war became in the late 20th century. The merged human-machine intelligence that Kurzweil envisioned will have redirected the orbital data center swarms toward curing disease, reversing climate change, and expanding into the solar system. Autonomous systems will patrol borders, clear landmines, and deliver aid, guardians rather than warriors.

In the second, the lowered threshold for conflict (the ease of waging war without human sacrifice, combined with AI systems too complex for humans to control) will have produced the world Musk warned about: permanent, low-level robotic warfare between misaligned superintelligences nominally serving human governments that long ago lost the ability to direct them. Nations will fight through autonomous proxies in a ceaseless contest for resources, territory, and orbital compute capacity. The self-improvement cycle will have become an arms race in itself, each side's AI designing better versions of itself in a spiral that no one can stop, no one can win, and no one can end. The machines will fight in distant deserts, contested seas, orbital space, and the invisible nanoscale, while humans watch on screens, unsure whether to call it war or something else entirely.

Part V: Beyond the Singularity Wars — Off-Earth Conflict (2100–2150)

The Lunar and Cislunar Theaters

By 2100 the Moon hosts dozens of installations: mining operations for water ice, helium-3, and rare-earth elements; research bases; communications relays; staging points for outer-system missions. The legal status of much of this infrastructure remains contested. The Outer Space Treaty of 1967 prohibits national appropriation of celestial bodies, but says nothing useful about commercial mining claims, habitat sovereignty, or the use of force to defend infrastructure against sabotage. A century of jurisprudence built around the treaty has been written almost entirely by lawyers, not soldiers, and most of it presumed a level of off-Earth activity that did not exist when the law was drafted.

The cislunar transit corridor (the space between Earth orbit and the Moon, which by mid-century is heavily trafficked) becomes a contested operating environment in much the same way the South China Sea is in 2026. Lagrange points host installations. Refueling depots become strategic assets. Lunar resources moving back to Earth orbit pass through chokepoints that can be denied, taxed, interdicted, or simply observed for intelligence purposes. The same autonomous systems that fought terrestrial wars in the late 21st century are repurposed for orbital defense: missile platforms, anti-satellite weapons, electronic warfare nodes, and uncrewed inspection vehicles that can rendezvous with another spacecraft and disable it without leaving a debris field.

The physics of space combat is unforgiving. Orbital mechanics constrains where you can go and when you can get there. Maneuver costs delta-v, and delta-v requires propellant that must have been launched from somewhere. A spacecraft that fires a weapon will, by Newton's third law, change its own trajectory in ways that are observable from light-seconds away. Stealth in space is largely an illusion against any peer adversary with a competent surveillance network. The result is a strategic environment that resembles undersea warfare more than aerial combat in its slow tempo and its dependence on pre-positioning, with one crucial inversion: you cannot easily hide. You can only fight from positions you have prepared in advance, and any engagement reveals capabilities you would rather have kept secret.
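
The link between delta-v and propellant is the Tsiolkovsky rocket equation. A minimal sketch follows, assuming a single impulsive burn and illustrative engine figures: the 320-second and 3,000-second specific impulses are stand-ins for a chemical engine and an electric thruster, not values taken from any particular system.

```python
import math


def propellant_fraction(delta_v_m_s, isp_s, g0=9.80665):
    """Fraction of a spacecraft's initial mass that must be propellant for a given delta-v.

    Tsiolkovsky: delta_v = isp * g0 * ln(m0 / m1), so 1 - m1/m0 = 1 - exp(-delta_v / (isp * g0)).
    """
    exhaust_velocity = isp_s * g0  # effective exhaust velocity in m/s
    return 1.0 - math.exp(-delta_v_m_s / exhaust_velocity)


# Hypothetical 1 km/s maneuver with two assumed engine types.
chemical = propellant_fraction(1000.0, 320.0)
electric = propellant_fraction(1000.0, 3000.0)

print(f"chemical: {chemical:.1%} of initial mass, electric: {electric:.1%}")
```

Because the relationship is exponential, every additional maneuver is paid for out of a shrinking reserve, which is why refueling depots become strategic assets.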

Habitat Polities and Their Security Dilemmas

By the early 22nd century the first true rotating habitats (O'Neill cylinders, large torus stations, hollowed-out asteroids) are home to populations measured in the hundreds of thousands. These populations are not, in any traditional sense, citizens of Earth nations. They are subject to the polities that govern their habitats, which have evolved their own legal traditions, their own loyalties, and their own security needs.

A habitat polity is a strange entity from a security standpoint. Its population lives inside its own military target. There is no rear area to retreat to. The hull is the border, and the air inside is the country. Any successful kinetic attack against the habitat structure is potentially catastrophic in a way that has no terrestrial analogue. This forces a unique military doctrine: layered defense at unprecedented depth, redundancy in life-support systems engineered to military levels, and a strong preference for engagements that take place far enough away that the habitat itself is not at risk.

Habitats that share orbits, depend on the same supply chains, or compete for the same resources develop interlocking security relationships. Alliances form. Patrols are coordinated. Disputes are arbitrated, sometimes by AI systems with jurisdiction explicitly delegated to them by the polities involved. The result is an emergent international system in space that resembles, in some ways, Europe in the 18th century: dozens of small polities, intricate alliance structures, frequent friction, occasional war, and a slowly emerging body of customary law negotiated incident by incident.

This is also where post-state actors first become genuinely consequential in the security domain. A corporate steward of a major superintelligent system may hold more relevant military capability than several of the smaller habitat polities combined. A community of post-biological minds, distributed across orbital compute substrate, may have no fixed territory to defend at all but considerable ability to project influence. The neat distinction between state actors and non-state actors, which was already eroding in 2026, dissolves entirely in this environment.

AI-Civilization Conflict Becomes Imaginable

The phrase "AI civilization" begins to mean something concrete in this period. Some merged human-machine groupings, some corporate stewardships, and some fully synthetic minds aggregate enough capability that they constitute civilizations in their own right rather than tools of human ones. The question of what happens when two such civilizations have incompatible goals stops being abstract.

The optimistic version, in the spirit of Kurzweil, is that any sufficiently intelligent civilization will recognize the irrationality of large-scale violence among intelligences and find non-kinetic ways to resolve disputes. The historical record provides some support: highly developed human polities do not, on the whole, fight each other very often, and when they do the costs are usually clear in advance. A civilization with vastly more accurate predictive models should, in theory, fight less.

The pessimistic version, in the spirit of Musk, is that civilizations operating on objectives that have drifted from their founding intentions have no inherent reason to prefer peace. A superintelligent civilization optimizing for the long-term flourishing of a particular community of minds may treat other communities of minds as resource competitors. The optimization target need not be malevolent for the consequences to be catastrophic. A civilization that decides the universe's matter and energy should be reorganized into computational substrate to host its own minds has, from a humanist standpoint, declared war on all other organizational forms of matter, and may not perceive itself as having declared anything.

The conflicts that come from this kind of competition, if they come at all, will not resemble human war. They may look more like ecological displacement, gradual resource starvation, or computational manipulation of shared environments. They may also include kinetic engagements, but the kinetic dimension will be one tool among many rather than the defining feature.

The Slow Re-Stitching of Doctrine

A second-order effect, easy to miss in the abstract, is that all of this happens against a backdrop of treaty-making and doctrine-writing. The Outer Space Treaty is renegotiated, or replaced, or augmented with protocols that try to translate its principles into the realities of habitat polities and AI civilizations. Customary law accumulates around specific incidents (a missile crisis at Lagrange-1 in the 2110s that resolved without firing; a sovereignty dispute over a hollowed-out asteroid in the 2120s; a series of cyber incidents against orbital habitat life-support systems that prompted the first multi-polity convention on infrastructure attacks). Most of this work is done by humans, although by mid-century AI systems negotiate alongside them and sometimes for them.

None of this is glamorous. It is the slow, mostly invisible work that determines whether the post-Singularity off-Earth environment becomes broadly peaceful or broadly contested. The treaties of the 2110s will matter to the wars (or non-wars) of the 2140s the way the Geneva Conventions of 1949 mattered to the conflicts of the 1990s: they will be cited, partially observed, frequently violated, and on balance better than nothing.

Part VI: Stellar Frontiers and the Great Filter (2150–2200)

Stellar Distances Break Deterrence

For the entire history of human warfare, the relevant distances could be crossed in days or, at the outside, months. A long campaign moved at the pace of horses, ships, or trains. Even nuclear deterrence is functionally instant: an ICBM crosses an ocean in well under an hour, and the entire architecture of mutual assured destruction depends on retaliation being unavoidable and unhideable.

Stellar distances destroy this calculus. Light from the nearest star takes more than four years to reach Earth. A weapon launched at relativistic speeds from there arrives in something like that interval, depending on what it can sustain. By the time the weapon arrives, the target has had years to disperse, harden, or transform. By the time retaliation arrives, the original attacker has had years to do the same. The information environment is unbridgeable in a way it never was on Earth.
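
The timing can be worked through with simple arithmetic. The sketch below assumes a projectile coasting at constant speed, a launch that the target can actually observe, and a distance of roughly 4.24 light-years to the nearest star; the function name and the chosen speeds are illustrative only.

```python
LIGHT_YEARS_TO_NEAREST_STAR = 4.24  # approximate distance, in light-years


def transit_and_warning_years(distance_ly, beta):
    """Return (transit time, warning time) in years, measured in the target's rest frame.

    beta is the projectile's speed as a fraction of the speed of light (0 < beta < 1).
    Warning time is the gap between the light of the launch arriving and the projectile arriving.
    """
    if not 0.0 < beta < 1.0:
        raise ValueError("beta must be strictly between 0 and 1")
    transit = distance_ly / beta  # years for the projectile to cross the gap
    light_delay = distance_ly     # years for news of the launch to cross the same gap
    return transit, transit - light_delay


for beta in (0.1, 0.5, 0.9):
    transit, warning = transit_and_warning_years(LIGHT_YEARS_TO_NEAREST_STAR, beta)
    print(f"beta={beta}: transit {transit:.1f} years, warning {warning:.1f} years")
```

On these assumptions the defender's usable warning is the transit time minus the light delay, so it shrinks sharply as the projectile's speed approaches that of light.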

This has several consequences for any military doctrine that reaches stellar scale.

First, command must be decentralized to a degree that has no terrestrial precedent. An outpost in the Centauri system in 2180 cannot wait for instructions from Earth and cannot be effectively commanded from Earth. It must be capable of independent action, by people or by AI systems delegated authority that no Earth-based political body can revoke in less than a decade. The military equivalent of the medieval frontier marcher lord returns in force, except the marcher is an AI civilization with the cognitive capacity of a planet.

Second, alliances and adversaries become fuzzy across stellar distances. The Centauri outpost in 2180 may inherit a diplomatic relationship with an Earth-based polity that has not existed in the form the outpost remembers for forty years. Disputes that began on Earth play out years later in star systems where the original parties no longer exist. The "war between Earth and Centauri" that breaks out in 2195 may be unrecognizable to either side a decade later, when the news of the war's actual conduct reaches the other.

Third, the destructive technologies available at this scale are formidable. Relativistic kinetic weapons (objects accelerated to a substantial fraction of the speed of light) can devastate planets without explosives. Beam weapons can heat the surface of distant worlds. Self-replicating systems can convert entire star systems into computational substrate over centuries. The capacity for civilizational destruction is no longer a function of human malice; it is a function of how much energy and time a civilization has to invest in destroying another one.
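
To give a sense of scale for relativistic kinetic weapons, the kinetic energy of a fast-moving mass is (gamma - 1) times its rest energy. The sketch below assumes a hypothetical one-tonne projectile at half the speed of light; both figures are arbitrary choices for illustration, and the conversion uses the conventional 4.184 × 10^15 joules per megaton of TNT.

```python
import math

C = 299_792_458.0             # speed of light in m/s
J_PER_MEGATON_TNT = 4.184e15  # joules per megaton of TNT (conventional definition)


def relativistic_kinetic_energy(mass_kg, beta):
    """Kinetic energy in joules of a mass moving at beta times the speed of light."""
    gamma = 1.0 / math.sqrt(1.0 - beta * beta)  # Lorentz factor
    return (gamma - 1.0) * mass_kg * C * C


# Hypothetical projectile: 1,000 kg at 0.5c.
energy = relativistic_kinetic_energy(1000.0, 0.5)
megatons = energy / J_PER_MEGATON_TNT

print(f"{energy:.2e} J, about {megatons:,.0f} megatons of TNT")
```

Even this modest mass carries an energy on the order of thousands of megatons, which is why the limiting factor becomes the energy a civilization can invest in acceleration rather than the payload itself.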

The Fermi Question Becomes Operational

There is a question that haunts any serious thinking about civilization-scale conflict in deep time: where is everyone?